Search results for “math”

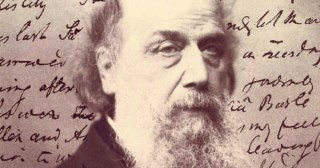

The Ethics of Belief: The Great English Mathematician and Philosopher William Kingdon Clifford on the Discipline of Doubt and How We Can Trust a Truth

“It is wrong always, everywhere, and for anyone, to believe anything upon insufficient evidence.”

The Trailblazing 18th-Century French Mathematician Émilie du Châtelet on Jealousy and the Metaphysics of Love

“It is the privilege of affection to see a friend in all the situations of his soul.”

The Invention of Zero: How Ancient Mesopotamia Created the Mathematical Concept of Nought and Ancient India Gave It Symbolic Form

“If you look at zero you see nothing; but look through it and you will see the world.”

Truth Beyond Logic and Time Beyond Clocks: Janna Levin on the Vienna Circle and How Mathematician Kurt Gödel Shaped the Modern Mind

“The past does not exist except as a threadbare fragment in the weaker minds of the many.”

Probability Theory Pioneer Mark Kac on the Duality of the Creative Life, the Singular Enchantment of Mathematics, and the Two Types of Geniuses

“Creative people live in two worlds. One is the ordinary world which they share with others and in which they are not in any special way set apart from their fellow men. The other is private and it is in this world that the creative acts take place.”

Mathematician Lillian Lieber on Infinity, Art, Science, the Meaning of Freedom, and What It Takes to Be a Finite But Complete Human Being

Mathematics and poetry converge in an ode to the “sweet reasonableness” at the heart of a psychologically balanced character.

How the French Mathematician Sophie Germain Paved the Way for Women in Science and Endeavored to Save Gauss’s Life

“The taste for the abstract sciences in general and, above all, for the mysteries of numbers, is very rare… since the charms of this sublime science in all their beauty reveal themselves only to those who have the courage to fathom them.”

Trailblazing 18th-Century Mathematician Émilie du Châtelet, Who Popularized Newton, on Gender in Science and the Nature of Genius

“One must know what one wants to be. In the latter endeavors irresolution produces false steps, and in the life of the mind confused ideas.”

Hidden Figures: The Untold Story of the Black Women Mathematicians Who Powered Early Space Exploration

A heartening testament to “the triumph of meritocracy” and to the idea that “each of us should be allowed to rise as far as our talent and hard work can take us.”